This is a set of incomplete notes for me to organize my understanding of the basic elements of convex duality. The aim is to have a rather linear presentation of the facts, without too many branches obfuscating the flow of logic.

Convexity

Convexity is a rather clean property. A function \(f : \R^n \to \Ri\) (we define \(\Ri = \R \cup \{+\infty\}\), it will be useful to allow positive infinite values) is convex is for any two points \(x, y \in \R^n\) and any \(\t \in [0, 1]\), we have

It says that averaging the input at most averages the output, or to put in a more common fashion, the function lies below the chord connecting any two points on its graph. As expected, there is a strong connection between convex functions and convex sets. For the sake of completeness, we recall that a set \(K \subseteq \R^n\) is convex if for any two points \(x, y \in K\) and any $\t \in [0, 1]$, we have

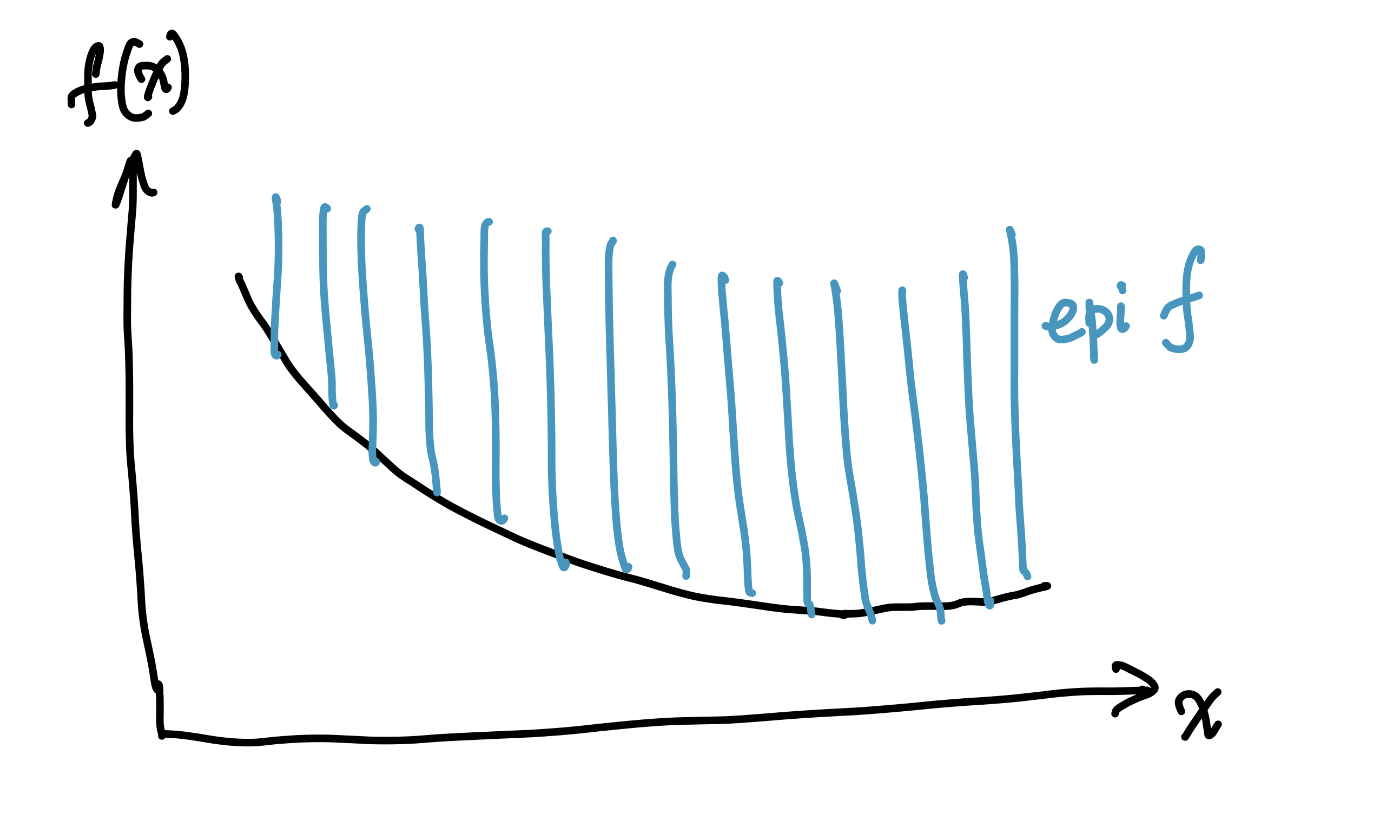

In this language, the convexity of a function \(f\) is equivalent to the convexity, as a set, of its epigraph ('epi' = 'above'):

Note that \(s\) is not allowed to be \(+\infty\) here, and thus it is possible, but certainly pathological, to have \(\epi f = \empty\), which happens only if \(f \equiv +\infty\).

The epigraph of a function is a convex set if and only if the function is convex.

Is there a reverse connection, associating a convex function to a convex set? Yes! Suppose $K \sse \R^n$ is a convex set. Then the function (which will be useful below as the penalty for violating membership in \(K\)):

is convex (it is here that \(+\infty\) values are useful). In this context, it is also helpful to define the domain of \(f\) as

Note that \(\dom \del_K = K\) for any convex set \(K\). In general, \(\dom f\) is convex for any convex \(f\), as may be seen by a simple argument.

Two related facts about convex functions and sets are worth noting:

- arbitrary intersections of convex sets are convex, which implies

- arbitrary pointwise supremum of convex functions is convex.

The beginnings of duality

Perhaps the simplest convex sets are halfspaces, sets of the form

These are also very simple to describe, just specify a "slope" vector \(\lam\) and "intercept" \(t\).

However, these are not merely simple convex functions, but they also play a key role in theory of convexity. Morally,

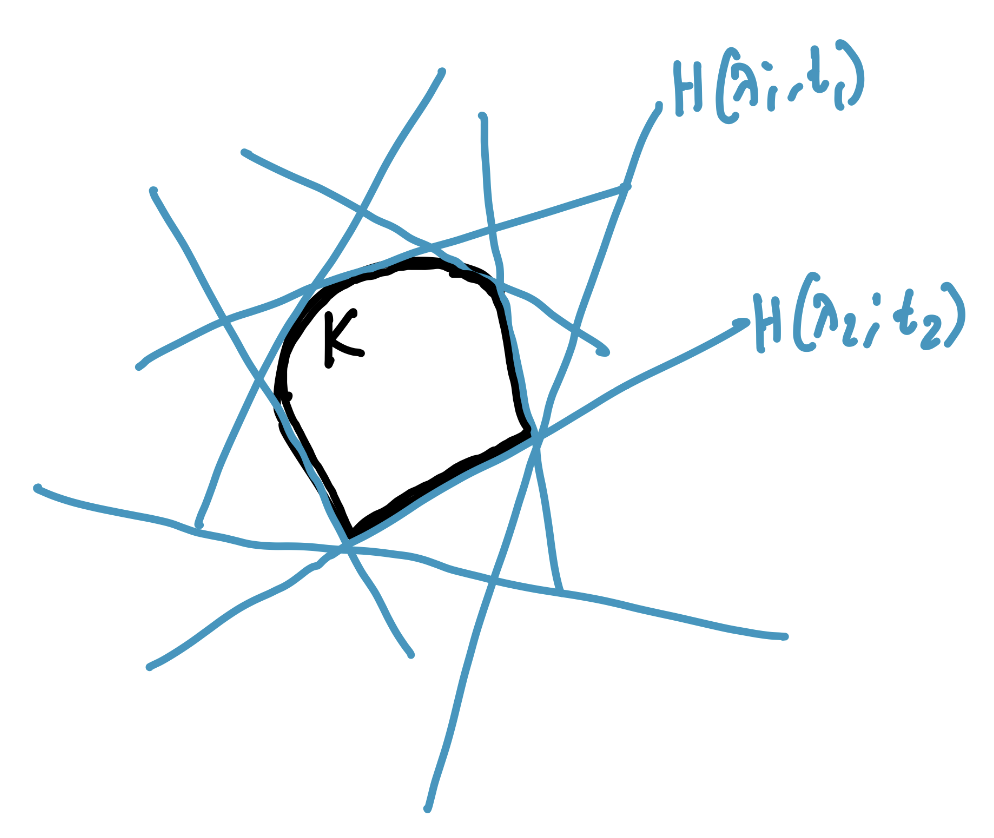

a convex set is the intersection of halfspaces containing it, i.e.,

$$ K = \bigcap_{\lam, t : K \subseteq H(\lam; t)} H(\lam; t). $$

A bunch of halfspaces containing a convex set \(K\). All of them together will intersect to \(K\).

It is this fact that underlies convex duality. But it is not true for all convex sets, but only because our halfspaces are closed (topologically), and intersections of closed sets are closed. So, we must restrict to closed convex sets, and indeed, it is true that a closed convex set is the intersection of halfspaces containing it. Of course, the side \(K \sse ...\) is easy, and the real content is the reverse inclusion, for which we need the following deep separating hyperplane theorem, one of the cornerstones of convex analysis:

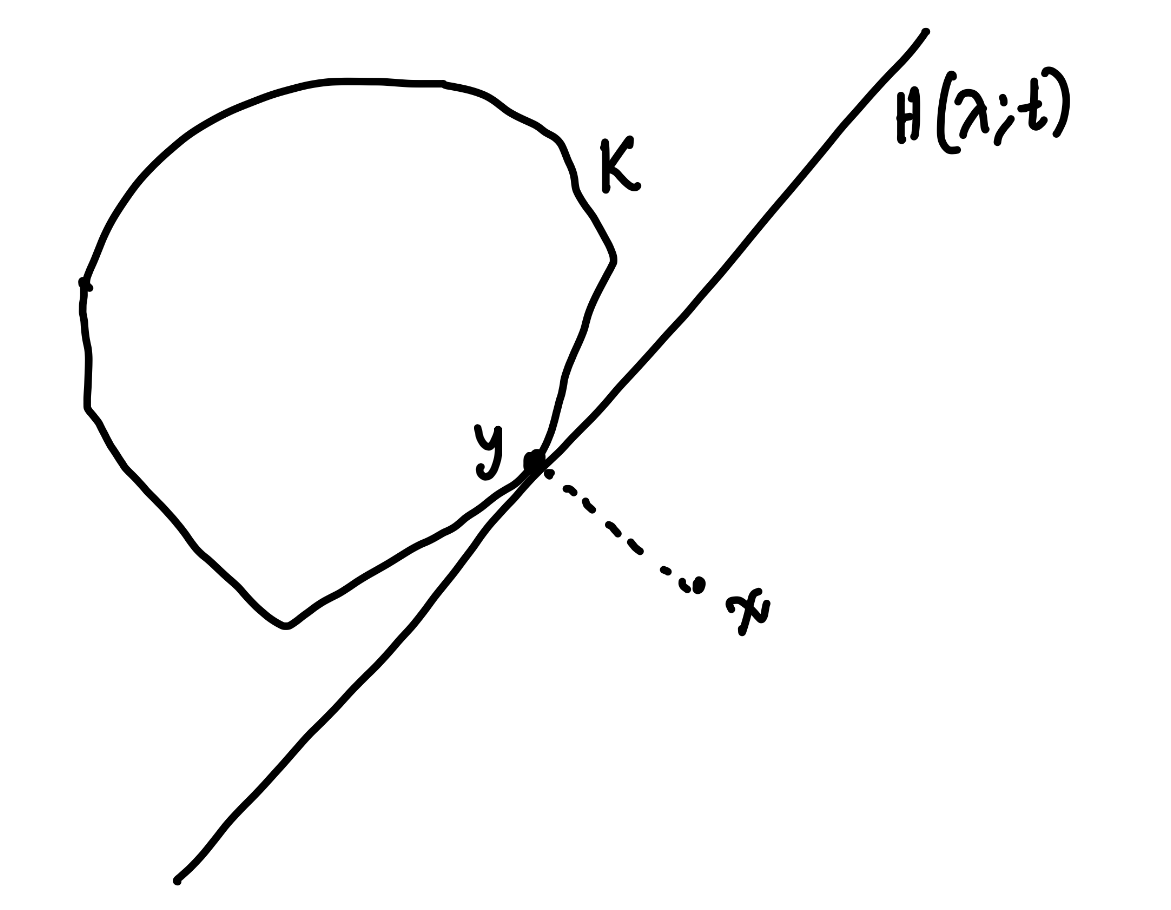

For any closed convex set \(K\) and any point \(x \not\in K\), there is a halfspace containing \(K\) but not \(x\).

Illustration of the separating hyperplane theorem.

The fact above immediately implies that if \(x \notin K\), there is some halfspace \(H(\lam; t)\) containing \(K\) but not \(x\), and thus \(x\) is not in the intersection of all halfspaces containing \(K\), i.e.,

All this justifies the refined statement

A closed convex set is the intersection of halfspaces containing it.

Return to convex functions

What sort of implications does the above have for convex functions \(f\)? Let us use the connection between convex functions and convex sets, and apply the above to \(\epi f\). If \(\epi f\) is closed, we can write

Let us simplify the condition \(\epi f \subseteq H(\lam; t)\). Note that since \(\epi f \sse \R^n \times \R\), we are talking about \(\tlam \in \R^{n + 1}\) (this is why we use \(\tlam\) instead of \(\lam\), reserving the latter for vectors on \(\R^n\)). For a fixed \((x, s) \in \epi f\), the condition is

where we adopt the notation \(\tlam = (\tlam_x, \tlam_s)\) with \(\tlam_x \in \R^n\) (the first \(n\) coordinates) and \(\tlam_s \in \R\) (the last coordinate). Note that for a fixed "slope" \(\tlam\), the intersection of all halfspaces containing \(\epi f\) is the halfspace with the "tightest" intercept \(t\), i.e., the smallest \(t\) satisfying the condition above, i.e.,

It will be useful to make our halfspaces a bit more specific. In particular, let us use the fact that if \(c > 0\), then \(H(\tlam; t) = H(c \tlam; ct)\), and so we can always assume that \(\tlam_s \in \{-1, 0, 1\}\). We now deal with these three cases separately.

We can summarize the conclusions of the analysis above as follows:

Assume \(f\) is not identically \(+\infty\), so \(\epi f\) is not empty. Define the support function of a convex set \(K\) as

$$ \sigma_K(\lam) = \sup_{x \in K}\; \Inn{\lam, x}, $$and the convex conjugate, also called the Fenchel conjugate or Fenchel dual, of a function \(f\) as

$$ f^\st(\lam) = \sup_{x \in \R^n}\; \Inn{\lam, x} - f(x). $$Then, if \(\epi f\) is closed, we have

$$ \epi f = (A \times \R) \cap B $$where

$$ \begin{aligned} A &= \bigcap_{\lam} \{ x : \Inn{\lam, x} \leq \sigma_{\dom f}(\lam) \} \sse \R^n, \\ B &= \bigcap_{\lam} \{ (x, s) : \Inn{\lam, x} - f^\st(\lam) \leq s \} \sse \R^{n+1}. \end{aligned} $$

In fact, set \(A\) can be simplified since it is the intersection of all halfspaces containing \(\dom f\), and thus must equal \(\dom f\) if \(\dom f\) is closed. Unfortunately, it is not necessary that \(\dom f\) is closed, even if you assume \(\epi f\) is closed, see the example in the fold below.

Therefore, in general, we cannot simplify \(A\) to \(\dom f\). However, \(A\) is closed by definition, and \(\dom f \sse A\), and thus \(\cl{\dom f} \sse A\), where \(\cl{S}\) is the topological closure of a set \(S\). Further, the following fact is true:

If \(K\) is convex, then so is \(\cl{K}\).

Therefore \(\cl{\dom f}\) is closed convex. Intersections of all halfspaces containing \(\cl{\dom f}\) is thus:

- on one hand, \(\cl{\dom f}\) itself, since \(\cl{\dom f}\) is closed convex.

- on the other hand, contains \(A\) (which is the intersection of all halfspaces containing \(\dom f\)) since any halfspace containing \(\cl{\dom f}\) must contain \(\dom f\) as well.

The same argument yields the following generalization of our earlier statement about closed convex sets:

The intersection of all halfspaces containing a convex set \(K\) is the closure \(\cl{K}\) of \(K\).

In particular, \(A = \cl{\dom f}\), and thus, succinctly, if \(\epi f\) is closed, we have

Now, fix a point \(x_0 \in \dom f\), and let us try to evaluate \(f(x_0)\). From the definition of \(\epi f\), it is clear that

Since \(x_0 \in \dom f\), we have \((x_0, s) \in \cl{\dom f} \times \R\) for all \(s\), and thus

A minute's thought reveals that then

which, recalling the definition of the Fenchel dual, relates \(f\) to its double dual, i.e.,

We conclude

If \(\epi f\) is closed and \(f\) is not identically \(+\infty\), then \(f = f^{\st\st}\) on \(\dom f\).

It is rather common to identify the set of conditions (a) \(f\) is convex, (b) \(\epi f\) is closed, and (c) \(f\) is not identically \(+\infty\) as the condition that \(f\) is proper closed convex.

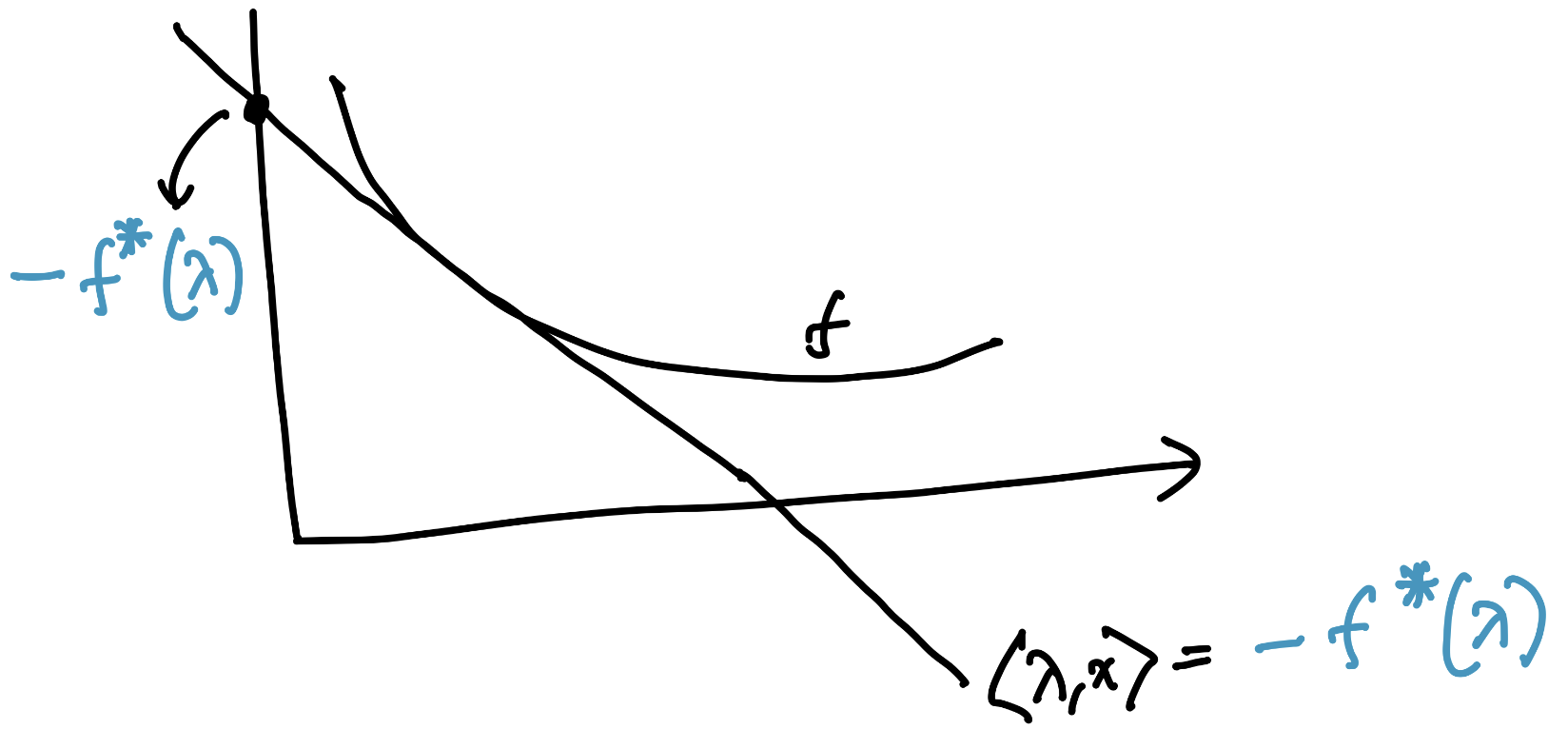

Illustration of the Fenchel dual of a function \(f\). The negative sign on the intercept \(-f^\st(\lam)\) is purely due to convention, but the core purpose of the dual is to encode efficiently the best possible halfspaces containing \(\epi f\).

Let us take a moment to understand what we have done. The Fenchel dual \(f^\st(\lam)\) encodes the tightest intercept \(t\) (i.e., the smallest, since our halfspaces are of "type" \(\leq t\)) such that the halfspace \(H(\lam; t)\) contains \(\epi f\), that is:

This corresponds to a \(\lam\)-slope supporting hyperplane of \(f\). It is useful to note that \(f^\st\) is convex in \(\lam\), simply because it is a supremum of linear (and thus convex) functions of \(\lam\).

The double dual simply says that \(f\) can be recovered by taking the supremum of all the supporting hyperplanes of \(f\)!

An example

To see this result in action, let us consider a somewhat weird example:

It is not hard to check that this is convex, but not strictly so, due to the flatness at 0.

Let us compute the Fenchel dual \(f^\st(\lam) = \sup_{x} \lam x - f(x)\). See the fold for details.

The calculations above yield

Note that even though \(f\) had infinite values, \(f^\st\) is finite everywhere. Now let us compute the double dual \(f^{\st\st}(x) = \sup_{\lam} \lam x - f^\st(\lam)\). Once again, see the fold for details.

Thus, \(f = f^{\st\st}\) everywhere, even outside \(\dom f = [-1, 1]\).

An approximation argument

It is slightly annoying to have to assume \(x_0 \in \dom f\) to get the double dual conclusion above, why can we not have \(f^{\st\st}(x_0) = +\infty\) if \(x_0 \not\in \dom f\)? The example above also suggests the possibility of such an improvement. The answer is that we can, for which we will now discuss a very beautiful approximation argument.

The key is the following:

if \(f \leq g\), then \(f^{\st\st} \leq g^{\st\st}\).

Thus, if we can approximate \(f\) by an increasing sequence \(f_k \to f\) pointwise such that \(\dom f_k = \R^n\) for each \(k\), then the following two facts complete the proof: -- \(f_k^{\st\st} = f_k\) for each \(k\), with no qualification on the argument \(x\). -- Since \(f_k^{\st\st}(x) \leq f^{\st\st}(x)\) everywhere, if \(x \not\in \dom f\), then

The problem therefore reduces to simply constructing such an approximation. Consider the sequence of increasingly penalized functions (constructions of this type are very common in optimization, for example in the definition of the proximal operator):

It is immediately obvious that \(f_k \leq f_{k + 1} \leq f\) for all \(k\), and that \(f_k < \infty\) everywhere, as soon as \(f(x) < \infty\) for some \(x\), which we already assume via properness. We still need to prove a few more things:

- \(f_k\) are all convex.

- \(\epi f_k\) are all closed.

- \(f_k \to f\) pointwise.

Why is \(f_k\) convex? In general,

if \(g(x, y)\) is convex over \(x, y\), then the marginal optimization

$$ h(x) = \inf_y\; g(x, y), $$is convex over \(x\).

Why is \(\epi f_k\) closed? In fact, there is an alternate criterion for \(\epi f\) to be closed, which is simpler to check in this case:

\(f\) is lower semicontinuous (lsc) \(\iff\) \(\epi f\) is closed.

where lower semicontinuity of \(f\) means that for any \(t\), the set \(\{x : f(x) \leq t\}\) is closed. Equivalently, for any \(x\) and any sequence \(x_n \to x\), we have \(f(x) \leq \liminf_n f(x_n)\). This property goes rather well with the "averages fall below chords" property of convexity. The proofs of these facts may be located elsewhere, see for instance ProofWiki.

In particular, if \(f_k\) is continuous then it is lsc, and then \(\epi f_k\) is closed. We will show the stronger property that \(f_k\) is \(k\)-Lipschitz.

It remains to show that \(f_k \to f\) pointwise. This should be rather intuitive, but we include the proof in the fold below.

Thus, we finally achieve the following result:

If \(f\) is proper closed convex, i.e., lsc and not identically \(+\infty\), then \(f = f^{\st\st}\) everywhere.

Duality under local assumptions

Although the result above assumes lsc globally, lsc can also be a local property, which is easiest to define by adapting the sequential definition:

\(f\) is lsc at \(x\) if for any sequence \(x_n \to x\), we have \(f(x) \leq \liminf_n f(x_n)\).

Given this local criterion, in this section we ask for a local version of the previous result. That is, can we assume lsc at one point, say \(0\), and achieve \(f^{\st\st}(0) = f(0)\)? To answer this, we begin with a construction.

Suppose \(f\) is a general convex function, not assumed to be lsc anywhere yet. Then \(\epi f\) is not necessarily closed, but it is convex. Consequently, as discussed above, the topological closure \(\cl{\epi f}\) is closed convex. But is it the epigraph of some function? Yes!

For a convex function \(f\), there exists a lsc convex function \(\cl{f}\) (called the closure of \(f\)) such that \(\cl{f} \leq f\) and \(\epi \cl{f} = \cl{\epi f}\). In particular, if \(f(x) < \infty\) for some \(x\), \(\cl{f}\) is proper convex. It also has the following properties:

- If \(f\) is lsc at \(x\), then \(\cl{f}(x) = f(x)\), even if \(f(x) = +\infty\).

- If \(g \leq f\) is a lsc function, then \(g \leq \cl{f}\).

The discussion should feel reminiscent of our prior discussions on closures of convex sets.

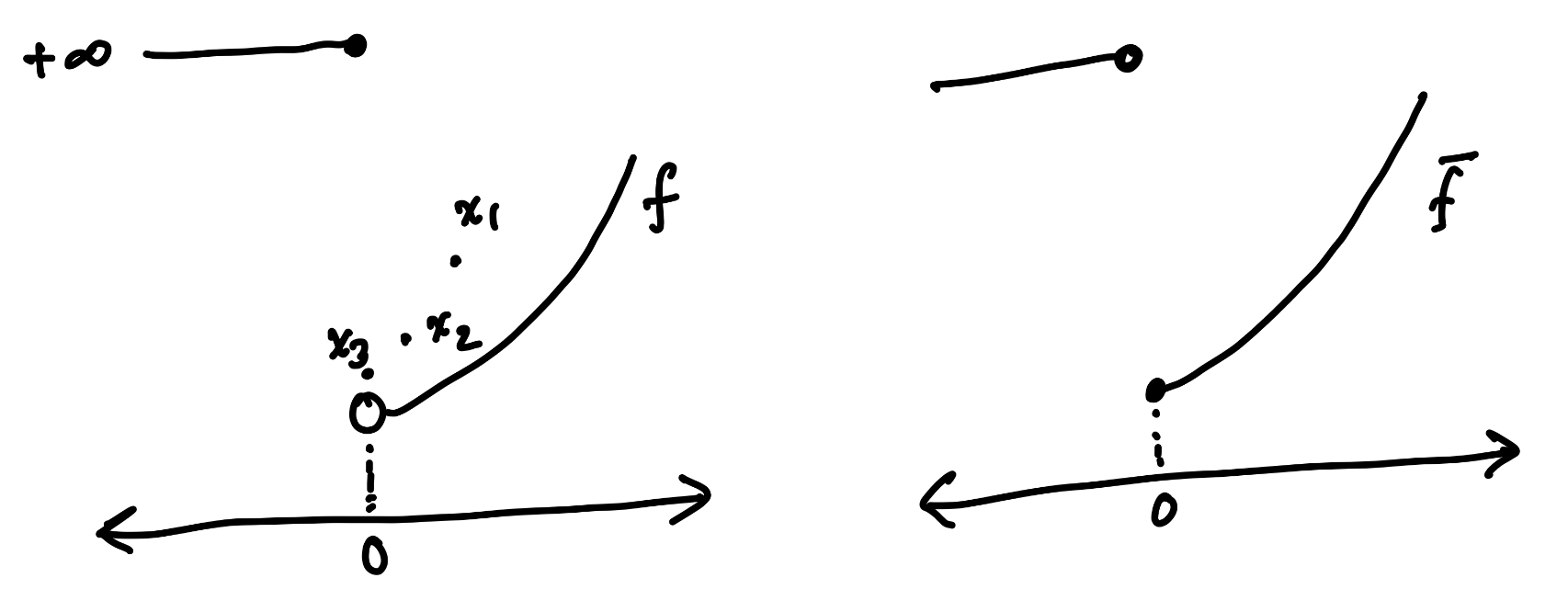

A weird convex function \(f\) (left) that is not lsc at 0, but instead has \(f(0) = +\infty\) (the open circle indicates that the graph does not continuously extend to that point). The offending sequence is illustrated as \(x_1, x_2, x_3, \ldots\) approaching 0. If it were lsc, \(f(0)\) would be smaller than the limit of \(f(x_n)\), but it is infinite instead. The closure \(\cl{f}\) (right) repairs this defect simply by making \(f(0)\) finite. Note that \(\cl{f} \leq f\), with the only strict inequality at \(0\).

Note that \(f^{\st\st}\) is always lsc, since it is a supremum of linear functions, due to the following simple fact:

The pointwise supremum of lsc functions are lsc.

Thus, \(f^{\st\st} \leq \cl{f}\), by property 2 above. However, since \(\cl{f}\) is proper convex, \(\cl{f}^{\st\st} = \cl{f}\) and since \(\cl{f} \leq f\), we have \(\cl{f} = \cl{f}^{\st\st} \leq f^{\st\st}\) (by monotonicity). This leads us to the beautiful conclusion strengthing the previous result:

If a convex \(f\) is finite at some point, then \(f^{\st\st} = \cl{f}\) everywhere.

Invoking the first property of \(\cl{f}\) above, we get the following local version of the double dual result:

If a convex \(f\) is lsc at \(x\), then \(f^{\st\st}(x) = f(x)\).

Summary thus far

It is a good time to summarize the results we have so far.

If \(K\) is a convex set, then the intersection of all halfspaces containing \(K\) is the closure \(\cl{K}\) of \(K\), that is, $$ \cl{K} = \bigcap_{\lam, t : K \subseteq H(\lam; t)} H(\lam; t). $$ An useful special case is when \(K\) itself is closed, i.e., \(\cl{K} = K\).

If \(f\) is a function, then we can define the Fenchel conjugate/dual \(f^\st\) of \(f\) as $$ f^\st(\lam) = \sup_{x \in \R^n}\ \Inn{\lam, x} - f(x). $$ This is always a lower semicontinuous convex function (even if \(f\) is neither), due to it being a supremum of linear functions. Lower semicontinuity of \(f\) is equivalent to \(\epi f\) being closed.

Fenchel duals are reverse monotone, that is \(f \leq g\) implies \(f^\st \geq g^\st\). Thus, \(f^{\st\st} \leq g^{\st\st}\) as well.

If \(f\) is proper convex, i.e., lsc and not identically \(+\infty\), then \(f = f^{\st\st}\) everywhere.

More generally, if \(f\) is convex and finite at some point, then \(f^{\st\st} = \cl{f}\) everywhere, where \(\cl{f}\) is the closure of \(f\).

If \(f\) is convex and lower semicontinuous at a given point \(x\), then \(f^{\st\st}(x) = f(x)\). This is true even if \(f(x) = +\infty\).

Constrained optimization

Consider the following problem. Given convex functions \(f\) and \(g\), how can we compute \(\inf_x f(x)\) subject to \(g(x) \leq 0\)? Let us also assume that \(g\) is never \(+\infty\). These are referred to as constrained optimization problems, and at their core, ask the question of how these two convex functions interact.

A really nice fact that allows us to relate this to our machinery is the following observation, which says that perturbing the constraint \(g(x) \leq 0\) moves the answer in a convex fashion:

The function

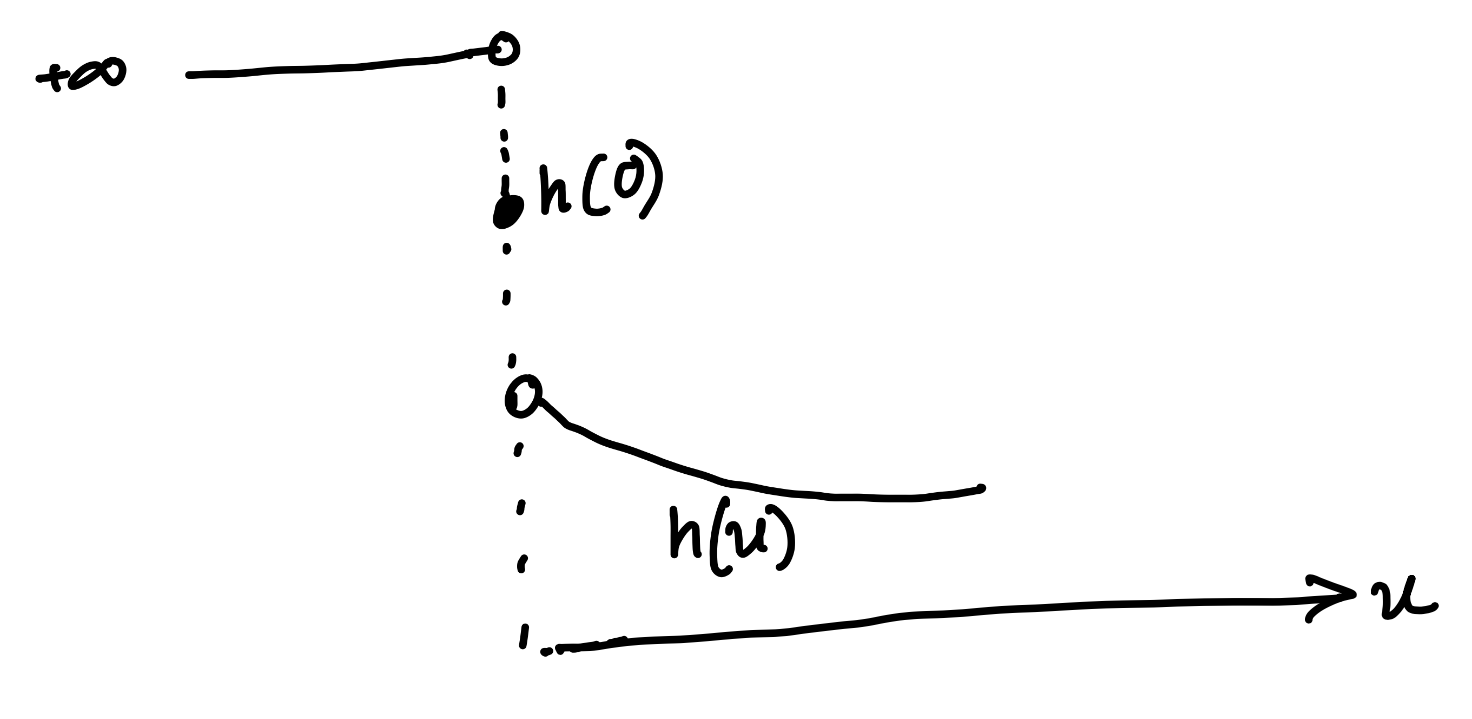

$$ h(u) = \inf_{x : g(x) \leq u} f(x), \quad u \in \R, $$is convex.

This is simply because it is the marginal optimization over \(x\) of the convex function \(\tilde{h}(x, u) = f(x) + \del_{\Rm}(g(x) - u)\), where \(\Rm = (-\infty, 0]\). For brevity, we will write \(\tg(x, u) = \del_{\Rm}(g(x) - u)\).

Remark: Note that if \(h(0) = -\infty\), strong duality holds trivially due to weak duality, and we omit this case below.

Let us compute the Fenchel conjugate of \(h\). For \(\lam \in \R\), we have

This is a very interesting computation. For fixed \(x, \lam\), let us focus on the counter-term

The term \(\tg(x, u)\) forces us to choose \(u \geq g(x)\).

- If \(\lam = 0\), clearly any choice of \(u \geq g(x)\) yields that the supremum is 0.

- If \(\lam > 0\), send \(u \to \infty\) to get an infinite value, so the supremum is \(+\infty\).

- If \(\lam < 0\), \(\lam u\) is decreasing. So the supremum happens at \(u = g(x)\), and is \(\lam g(x)\).

Therefore, we conclude

Framing this in a slightly different way, we have

which one may recognize as the Lagrangian dual of the original problem. Before discussing interpretations, let us first finish a blind computation of the double dual \(h^{\st\st}\).

From the definition \(h^{\st\st}(u) = \sup_{\lam} \lam u - h^\st(\lam)\), it is clear that there is no point to choosing \(\lam > 0\). Since we expect this to equal \(h(u)\), of which we are only interested in the case \(u = 0\), let us compute

Our prior results now appear extremely strong. Since \(h\) is convex, as soon as \(h\) is lsc at \(0\), we will have \(h(0) = h^{\st\st}(0)\), and thus

It should be clear that the same result would hold, with suitable modifications, if instead of one constraint \(g(x) \leq 0\), we had multiple constraints \(g_i(x) \leq 0\) for \(i = 1, \dots, m\). This is precisely the usual statement of strong duality for convex optimization problems. Note that the left-hand side is always \(\geq\) the right-hand side, which follows from \(h \geq h^{\st\st}\), and is called weak duality.

We point out that in the case when there are multiple constraints \(g_1, \ldots, g_m\) all \(\leq 0\), the same analysis will reveal that \(h^\st(\lam) = +\infty\) unless \(\lam \leq 0\) coordinatewise. Thus, everything continues to hold with \(\lam \gtreqless 0\) interpreted coordinatewise.

Lower semicontinuity at \(0\)

It would appear that we are all set to conclude strong duality for our constrained optimization, modulo a seemingly weird requirement, i.e., that \(h\) is lsc at \(0\). What does this even mean?

If \(h\) were not lsc at \(0\), we would have an \(\eps > 0\) and a sequence \(u_n \to 0\) such that

In particular, it is possible to find arbitrarily small \(u\) such that

that is, the optimal value suddenly drops by at least \(\eps\) when we relax the constraint from $g(x) \leq 0$ to \(g(x) \leq u\), suggesting that \(\{x : g(x) \leq 0\}\) lies on the threshold of a nontrivial improvement.

One possible shape of \(h\) when it is not lsc at \(0\). \(h(0)\) is still finite. You should check geometrically that \(h\) is convex. One naturally wonders if \(h(u)\) can be finite for some \(u < 0\) while still looking like this for \(u \geq 0\)? Continue reading.

Note that any such \(u\) must be positive. Consider the sublevel set \(F = \{x : f(x) \leq h(0) - \eps/2\}\). This is a closed convex set, (assuming \(f\) is lsc) and does not intersect \(G_0\), where we also define the (increasing in \(u\)) closed convex (assuming lsc \(g\)) sublevel sets

But there are arbitrarily small \(u\) such that \(F\) intersects \(G_u\), even though \(G_u\) decrease to \(G_0\) as \(u \to 0\) and \(F \cap G_0 = \empty\). Is this possible?

Topologically atleast, yes. For instance, suppose \(G_u = [1/u, \infty)\) and \(F = [0, \infty)\), all closed. Then \(G_0 = \empty\) and \(F \cap G_0 = \empty\).

However, suppose there is \(u_0 > 0\) such that \(G_{u_0}\) is compact (for instance, think of \(g(x) = \Norm{x}_2^2 - 1\), which yields the compact sublevel set \(G_{1} = \Norm{x}_2^2 \leq 2\)). Then, each set \(G_u\) is compact if \(u < u_0\), and so is \(F \cap G_u\). Therefore, by Cantor's intersection theorem, we have that \(F \cap G_0 = \bigcap_{u < u_0} F \cap G_u\) is nonempty. We obtain our first sufficient condition for strong duality:

If \(f, g\) are proper convex, and if for some \(u > 0\), \(\{x : g(x) \leq u\}\) is compact, then strong duality holds.

Essentially, in this setting, you can forget about the rest of the space, so that the problem is over a compact domain \(G_u\); this has been recorded elsewhere, see for instance Estrin and Friedlander (2020), Proposition 6.1b.

In the case of multiple constraints, it turns out that we only require one of the constraints to have a compact sublevel set for some level \(u > 0\). Intuitively, this already restricts the problem to a compact domain; the details are included in the fold.

Slater's condition

The picture in the previous section should raise a natural question. Can \(h(u)\) be finite for some \(u < 0\) while still having \(h(0)\) be non-lsc? It should be geometrically clear that this is not possible, and an offending chord will always be present. Believing this, what does \(h(u)\) being finite for some \(u < 0\) even mean? It simply means that \(u < 0\) is feasible, that is, there is some \(x\) such that \(g(x) \leq u < 0\) and \(f(x) < +\infty\). This is precisely the content of Slater's condition, which we now proceed to explain and derive rigorously.

Note that the argument for strong duality under compactness above did not really use convexity in any form, and as a result, the condition, compactness, is somewhat nontrivial to check for general \(g\).

Let us return back to the original question: when can we rule out the existence of sequences \(u^n \to 0\) such that \(h(u^n) < h(0) - \eps\) for some \(\eps > 0\)? The existence of such a sequence suggests that there are near-optimizers \(x_n\) such that

- \(f(x_n) < \inf_{x : g(x) \leq 0} f(x) - \eps/2\) for all \(n\),

- \(g(x_n) \to 0\).

The second point is due to the observation that all such \(x\) must have \(g(x_n) > 0\). Therefore, replacing \(\eps/2\) by \(\eps\) we have the following:

For each \(x_0\) with \(g(x_0) \leq 0\) we have \(f(x_n) < f(x_0) - \eps\) and \(g(x_n) \to 0\).

How close can we push the point \(x_0\) to the best possible value of \(f\)? Note that any intermediate point between \(x_0\) and \(x_n\) must improve \(f\) due to convexity.

Fix \(x_0\) and write \(g(x_0) = -\al\). Then, defining

we have

Thus, the point \((1 - \t) x_0 + \t x_n\) is a feasible point for the original problem, implying

Rearranging, we get

Now comes the crucial part. Assume \(f(x_0) < +\infty\) and \(\al > 0\), i.e., \(g(x_0) < 0\). Then \(\t \to 1\) as \(n \to \infty\), and thus the right-hand side goes to \(-\infty\), a contradiction. Note that this argument just enacted the intuition at the beginning of this section suggesting that an offending chord willl always be present if \(h\) is not lsc at \(0\).

Essentially this says that if there is \(x_0\) with \(g(x_0) < 0\), you can construct a nontrivial convex combination with \(x_n\) to get a feasible point that inherits the improvement of \(x_n\) over \(h(0)\) to a certain degree. This improvement is not spoiled by any value of \(f(x_0)\) as long as it is finite, since \(\t \to 1\) on account of \(g(x_n) \to 0\).

We obtain Slater's condition for strong duality:

For convex \(f, g\), if there is some \(x_0\) such that \(g(x_0) < 0\) and \(f(x_0) < +\infty\), then strong duality holds, i.e.,

$$ \inf_{x : g(x) \leq 0} f(x) = \sup_{\lam \geq 0} \Box{\inf_x f(x) + \lam g(x)}. $$

An alternate view

All the work in the last three sections allowed us to conclude that Slater's condition is essentially a way to ensure the lower-semicontinuity of the optimization problem as we relax the constraint. Now let us again look at our computations, but from the perhaps easier perspective of convex sets.

The definition of \(h(u)\) can be equivalently written via its epigraph as

The convexity of \(\epi h\), and thus of \(h\), is rather self-evident now. Put this way, we see that the boundary of \(\epi h\) is the Pareto frontier of the dual objectives \(g\) and \(f\).

Then, the intersection of all halfspaces containing \(\epi h\) is the closure \(\cl{\epi h}\). It may be quickly checked that \(h\) being lower semicontinuous at \(0\) is equivalent to \(\cl{\epi h}\) containing no point of the form \((0, t)\) with \(t < h(0)\). We will come back to this later. For now, let us fix a certain slope \((\lam_1, \lam_2) \in \R^2\) and determine the tightest intercept \(c\) such that \(\{(u, t) : \lam_1 u + \lam_2 t \leq c\} \supseteq \epi h\).

We saw a similar analysis earlier, so we will not repeat it here, and instead will be somewhat loose in our arguments here. Let us just restrict to the interesting case \(\lam_2 = -1\), and call \(\lam = \lam_1\) for brevity. The condition on this halfspace is to contain \(\epi h\), that is

How can we compute the smallest such \(c\)? Note that \(\lam > 0\) is not possible if \(c < \infty\). If \(\lam = 0\), the condition asks for \(c \geq -t\) (or \(-c \leq t\)) whenever there is some \(x\) with \(f(x) \leq t\), for which the tightest choice is \(-c = \inf_x f(x)\). This is also consistent with the intuition that \(\lam = 0\) corresponds to flat halfspaces, among which the best supporter of \(\epi h\) is \(t = \inf_x f(x)\).

If \(\lam < 0\) however, the situation is more interesting. The condition on \(c\) may be rewritten as

Clearly, there is no point is choosing \(u\) larger than \(g(x)\) or \(t\) larger than \(f(x)\), so this is equivalent to

This is an alternate way to see the birth of the Lagrangian dual, which was computed earlier via a more algebraic manipulation. In particular, \(-\lam\) is called the Lagrange multiplier of \(g\) (the minus sign is just a consequence of our conventions and should not be bothered with too much). The choice of the slope of \(\lam\) sorts the Pareto frontier according to \((-\lam) g(x) + f(x)\) and chooses the best one with this tradeoff.

Call this optimal \(c\) as \(c_\lam\). Note that, by definition, the intercept \(-c\) is the point where the halfspace crosses \(u = 0\), and thus, strong duality at \(0\) is equivalent to \(\sup_\lam -c_\lam = h(0)\), that is

an expression that was also derived earlier, except with \(-\lam\) renamed as \(\lam\).

In this formulation it is also simple to see why the lower semicontinuity of \(h\) at \(0\) is important. If there is a sequence \(u_n \to 0\) such that \(h(u_n) < h(0) - \eps\), any hyperplane supporting \(\epi h\) is basically "blocked" by points \((u_n, h(u_n))\).

Slater's condition is also clear. If \(g(x_0) < 0\) for some \(x_0\) with \(f(x_0) < +\infty\), then \(\epi f\) contains some finite point \((u, t)\) with \(u < 0\). The segment from \((u, t)\) to \((u_n, h(u_n))\) is contained in \(\epi h\), whose value at \(u = 0\) gets closer and closer to \(h(0) - \eps\) (under the assumption that lsc fails at \(0\)). This produces a contradiction.

Finiteness of the multiplier

The last question we wish to discuss in these notes is the following: when is the Lagrange multiplier \(\lam\) finite? Note that the statement of strong duality does not assert that the supremum over \(\lam\) is attained, but it will be useful to know when it is.

Since the Lagrange multiplier for the constrained optimization problem is essentially (up to sign), the dual variable \(\lam\) in the Fenchel conjugate of \(h\), we will work in the generality of Fenchel duals. To that end, let us return to the statement of strong duality for Fenchel conjugates, which we restate here:

If \(f\) is proper convex, then for each \(x\),

$$ f(x) = \sup_{\lam}\; \Inn{\lam, x} - f^\st(\lam). $$

It is useful to introduce the idea of subgradients of \(f\) at \(x\).

A vector \(\lam\) is a subgradient of \(f\) at \(x\) if for all \(y\),

$$ f(y) \geq f(x) + \Inn{\lam, y - x}. $$The set of all subgradients of \(f\) at \(x\) is called the subdifferential of \(f\) at \(x\), and is denoted by \(\partial f(x)\).

If \(f\) is convex and differentiable at \(x\), then \(\partial f(x)\) is a singleton containing the gradient \(\nabla f(x)\).

The canonical example of subgradients with nondifferentiable \(f\) is the absolute value function \(f(x) = |x|\). It may be seen that \(\partial f(0) = [-1, 1]\), a convex set. It may be shown rather easily that the subdifferential is always convex set.

Now suppose a finite \(\lam\) attains the supremum in the dual expression for \(f(x)\), that is

Then, since \(f^\st(\lam) = \sup_y \Inn{\lam, y} - f(y)\), we have for any \(y\),

that is, \(\lam\) is a subgradient of \(f\) at \(x\). Conversely, if \(\lam\) is a subgradient of \(f\) at \(x\), then

The right hand side holds for all \(y\), and thus

Consequently,

The supremum in the dual expression for \(f(x)\) is attained at \(\lam\) if and only if \(\lam\) is a subgradient of \(f\) at \(x\).

This immediately tells us that if \(f\) is convex and differentiable at \(x\), then the supremum in the dual expression happens at the unique subgradient \(\grad f(x)\), and thus is attained at a finite \(\lam\).

The benefit of the prior analysis is that the question of dual attainment is reduced to a problem involving only the primal function \(f\), and not its dual \(f^\st\) which may be more complicated to analyze.

The intepretation of subgradient is that \(\lam\) is a subgradient of \(f\) at \(x\) if the linear function \(\Inn{\lam, y - x}\) is a lower bound on the improvement of \(f\) from \(x\) to \(y\).

Here is an interesting example:

Of course \(\partial f(x) = \{-\fr{1}{2\sqrt{x}}\}\) for \(x > 0\), the gradient. The problematic case is \(x = 0\). No finite \(\lam\) can be a subgradient, since the slope at \(0\) is infinite, and thus \(\partial f(0) = \empty\).

This cannot happen if \(x\) is in the interior of the domain of \(f\).

Note that Slater's condition also implies that \(0\) is in the interior of the domain of \(h\), since if \(h\) is finite at some \(u_0\) (and Slater asserts this for some \(u_0 < 0\)), it will automatically be finite for all \(u > u_0\).

Therefore, under Slater's condition, not only does strong duality hold, but also the corresponding Lagrange multiplier is finite and the dual supremum is attained.

This is another reason why Slater's condition is preferred to the compactness condition.

P.S.: Some of these statements can be strengthed by considering relative interiors, which are essentially interiors when restricted to the hyperplane containing a set \(K\). Most results pass to these versions simply by applying the corresponding result with that hyperplane as the universe, but we do not discuss this here for simplicity.